Like a lot of people in the live events industry with nothing better to do, I dived head first into the real-time virtual production world.

It’s been well over a year since then, and I am now reflecting on my experiences.

Virtual Production and real-time graphics have been around for a long time, but the rapid increase in GPU power has fueled exponential growth over the past few years.

Real-time GPU rendering is not a new concept; it has long been the backbone of live video effects, media servers and VJ software.

The sudden boom was a perfect storm created by two industry giants (Disney and Unreal Engine), combined with the sudden pause of live events, which left mountains of LED screens and technicians with nothing better to do.

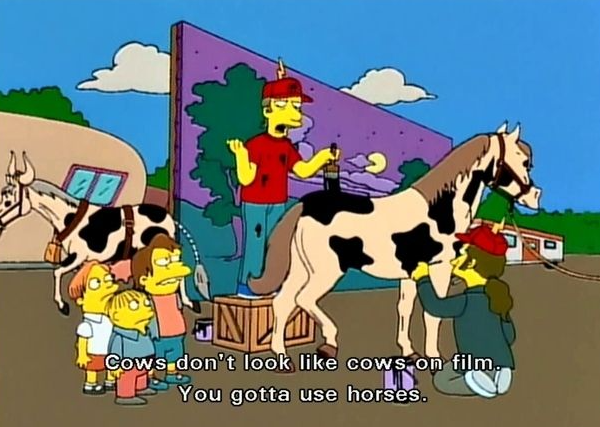

By now almost everyone has seen the behind-the-scenes videos from The Mandalorian. I'm sure anyone who has actually worked in Unreal Engine will agree that the promotional videos are very well produced marketing, and the actual experience is far from the magic they imply.

That’s not to say it’s not amazing. Unreal Engine is incredible, by far the leader in real-time realistic rendering, and very close to magic.

But it doesn’t “just work” as the promotional videos make it appear. There is a lot involved in getting it running smoothly, and even then there will still be hiccups.

I think we have reached a strange tipping point where people’s expectations have exceeded what current computers can actually do.

Computers are light years ahead of where they were 10 years ago, but with all the attention around AI, machine learning and real-time, many people now assume computers can perform any task instantly (especially those magical machines in the cloud that can produce answers in milliseconds). That is far from true, and in fact computing limitations are the only reason we have any security online.

Vast numbers of computers mining Bitcoin are all trying to solve an algorithm to release blocks, and current estimates by today’s standards have the final block being calculated around 2140.

Apps showing off instant AI features which can change our faces in real time have taken over our phones.

I think it is the widespread access to these ground-breaking technologies that has pushed people’s perceptions beyond what computers can actually do.

Anyone working in the 3D rendering world will agree the new generation of render engines is game changing.

The problem is that many of the people who have suddenly entered this space have never had to suffer through hours of rendering for a couple of frames.

Many downloaded Unreal ready to add it to their suite of video tools. Already familiar with tools like Final Cut and After Effects, they expected Unreal to be the same kind of software, not understanding that Unreal is a development environment and not an end-user application like traditional editors.

Unreal sits in a strange space where it looks and operates like a standard application and even offers a lot of traditional end-user software features, but this is all really just a pretty skin on top of a development environment.

And the process of getting an LED volume up and running goes far deeper than just the application. It requires finding ways to connect multiple applications running different tasks across multiple machines, all working together in perfect sync to deliver every frame.

It was these common misconceptions that really made it difficult to properly explain to others what Unreal Engine actually is.

This put me in a strange place in my personal experience.

Feel free to skip past my personal experience and check out my final thoughts.

I had been playing with Notch as a virtual set and real-time engine for a few years but Unreal had always been in the back of my mind, although I hadn’t really looked much into it as the thought of learning yet another system was daunting.

But when lockdowns became serious I was offered free rein of a large amount of LED screen, a studio space and some pretty powerful computers.

So, along with a ragtag group of live events orphans, we began playing with Unreal Engine, and surprisingly we got some good results very quickly.

We had Unreal driving the LED volume with some basic environments, and camera tracking working with a Vive VR system which we hacked to send position data to Unreal…

This was early on. Facebook hadn’t yet been flooded, so we felt like we were on top of the world. We were solving problems rapidly, and although the environments were basic, everything was working in principle. It was an amazing proof of concept…

This is the honeymoon period I’m sure many experience, where they go from seeing Unreal as a foreign new system to getting a sound understanding very quickly, just before realising how deep a rabbit hole Unreal actually is.

Very similar to the Dunning-Kruger effect, but instead of another upswing there is a much bumpier path after the initial peak.

Things slowed down quickly, and I’m sure many went through a similar form of Unreal depression after their initial high.

The big challenges were actually the easiest hurdles to cross; it was the ever-growing number of small challenges that made things seem more and more complicated. These small challenges were far less documented and much harder to find answers for, and quite often the answers we eventually got were things like:

- That’s a custom built feature

- part of a future release

- not compatible with our hardware

- no longer supported

- still in beta

- only works in a previous version

Once we initially got a proof of concept running, a few people came to check out what we were playing with, and this is where our own path really changed.

By two people in particular.

Someone who had spent decades in the film industry but never really found his place. He sees himself as a director, cinematographer, acting coach and sound engineer… let’s call him Jack.

And someone constantly trying to launch the next big start-up. He knows all the tech buzzwords and can name-drop enough programming languages to sound like he knows something, with a track record of abandoned websites, marketing jargon and stock images. He seemed to have a lot of projects on the go, but it became apparent these were all the same. Name-dropping quantum networking, Python and skunkworks, as long as he kept talking it gave the illusion of progress… let’s call the man behind the next big tech start-up Mark.

So once these two got involved our dynamic changed.

Our open collaborative team was suddenly given direction, and for some this was exciting. All of a sudden Mark showed up with a presentation about the new company we were all apparently now operating under and how things were going to be big, venture capital, seed funding, blah blah…

Mark mentioned that at some point there would be NDAs imposed. This was a very clever ploy on his part: by not actually imposing them as signed contracts but alluding to them in the future, he was able to get us to work under a shield of secrecy without any formal agreement, which meant he could slip out of any future threat of ownership or financial responsibility.

Jack treated this as his way to finally make it big in the film world he had spent most of his life struggling to find his place in. All of a sudden he was able to book meetings with big players who had never heard of him and they actually listened to him because he was involved in the next big thing.

These two became a toxic combination.

Mark saw Unreal as something he could white label as custom software and sell off as a proprietary system.

His technical understanding saw it as a programming language which you could write into a custom application.

Jack saw Unreal as something like After Effects: a piece of software for film editing and chroma keying, just with real-time abilities. He really expected it to just work, and if there was a glitch you could just restart the program.

Unreal sits somewhere between these two and doesn’t fit into either mindset.

Despite my attempts to explain this, neither was actually interested in Unreal Engine. I sent them countless videos and tried to show them, but “The Mandalorian” demo was all they needed to go out into the industry and pretend they knew what they were talking about.

Neither of the two ever took the time to look at how the system actually worked, yet believed themselves more than qualified to educate the industry.

We continued to work (for free), as having access to these toys was still exciting.

And despite the frustrations of “upper management” we enjoyed working with each other and saw some potential in what we were doing.

But their constant pressure and outlandish promises really started to affect us.

They were booking a constant stream of industry demos and we would sit there in silence as they rattled off meaningless jargon and impossible promises.

They were lucky masks were mandatory as none of us could keep a poker face through their insane promises and gross misunderstanding of the technology:

- you can be anywhere in the world instantly

- you want Paris, we could give you Paris in seconds

- this is the end of green screen

- we’ve done the math, this saves productions 75%

- you can shoot a whole feature film here

- you don’t need lighting anymore it all comes from the screens

- you can buy any environment you need from the marketplace and create a full world without the usual team of 3D artists

They really didn’t understand the limitations, and they were constantly pushing to have all the features shown off in the Mandalorian promos (a lot of which were not publicly released at the time).

Despite all these promises of leading-edge technology, we were still running on a shoestring with hacked-together tech and not using proper tracking or media servers.

We were under enormous pressure and constantly trying to meet expectations. We built demo environments, but as we upgraded and added features, some would no longer work or certain functions would break.

They expected us to be constantly developing to meet their expectations whilst remaining able to perform an industry demonstration at a moment’s notice.

And if they looked foolish in front of their clients because the system crashed or took too long to load, or the mountain we could move on cue last week wasn’t working, they would blame us and tell the clients “sorry, this never happens”.

YES! Yes it does, I would tell them afterwards, and try to convince them that such promises would cause us major problems if anything amounted to a real paying project. I was terrified that we might actually get a real job under such promises, and I would be left alone trying to load the system with a full cast and crew all on the clock waiting for me.

Mark knew a bit about software development and didn’t understand why we weren’t working in a traditional dev style build/production environment.

We tried to explain that it wasn’t as simple as software development. We had multiple machines networked to work in unison, plus tracking; everything was only just holding together, and as we tried to progress we had to change the networking, how systems linked and where files were stored. There was no way to ensure your project from last week would always work.

Jack didn’t understand the amount of work involved in creating worlds.

He would come in with his camera and want to spend the day shooting ad-hoc.

- Let’s explore and find somewhere to shoot

- give me another world maybe something with snow

- make the cars drive

- make it look like I’m riding a motorbike

- give me a virtual person to interact with

He clearly didn’t understand the fundamentals of VFX and the size of the teams usually involved in creating environments for movies, let alone that those aren’t full environments you can just ‘explore’ to find somewhere nice to shoot.

I tried to explain that it takes time; you can’t just “snap your fingers and go to Paris”.

He would then say, OK I’ll give you a list of places and we can try them tomorrow.

It was a sickening feeling and we felt like frauds pretending to be VFX experts in a world we had just stumbled into.

I found myself trying to explain VFX fundamentals to someone who was in the industry and really should know more than me.

We had lost our way and abandoned our core values. We were a small team of tech lovers with time on our hands who had somehow ended up working for free on a project we didn’t really believe in and weren’t even allowed to talk about. We couldn’t share any footage, so we were also isolated from the community who were openly working in this field too.

At one point we had the opportunity to talk with the Unreal team. This was very exciting: we had questions, and this was a huge opportunity. The meeting was hijacked by Jack and Mark, who big-noted themselves and tried to tell the Unreal team how things should be. We missed our chance at a dialogue, and it was clear from the Unreal team’s expressions that they didn’t take us seriously.

This was our only meeting and we blew any chance of collaboration…

This didn’t stop Jack and Mark. They now had more spin, telling prospective clients:

- They were in constant communication with Unreal having weekly meetings with their developers

- That they were now writing the handbook on Virtual Production for Unreal

- That Unreal was directly backing this project

As other teams began showing up, we would have loved the chance to share knowledge and learn from them too. However, we weren’t allowed to talk, and as the threat of competition increased their pressure on us, their stories became more and more far-fetched.

Eventually our team fizzled out as some real events started coming back as restrictions eased and focus shifted to getting back to work.

We did make a few interesting projects in that time, but Jack and Mark’s dream empire faded away, in part, I believe, because they had actually accumulated some financial responsibility along the way that they wished to avoid.

Whilst the team has mostly disbanded, the test studio continues to function and has completed several real projects.

The gold rush around virtual production has eased, as it has become apparent things aren't quite as easy as they appear.

Apologies as this got rather personal and shifted from the overall experience of Virtual Production.

So despite the frustration, in the end I am still grateful for the experience. I learned a lot and met some very talented people along the way, with whom I will continue to find new and exciting ways to work.

Whilst I didn’t agree with the direction, I do need to appreciate that without being given targets and deadlines, and just left to play, we most likely would have achieved far less.

Having a project which gave us purpose during lockdown was of tremendous value; being challenged was a welcome distraction. For that I am truly appreciative of the opportunity the LED supplier and venue provided, which on their part still came with very real costs.

And with that I’ll leave you with my:

Final Thoughts

The evolution of real-time rendering in Virtual production is an exciting new tool to be added to the film industry.

Virtual Production is not new, but has become far more sophisticated in the past few years.

However, there is still a lot to consider. It can be very expensive, both in actual hardware costs and in flow-on costs resulting from changes in the way a production is created.

Change of workflow

VFX for traditional green screen is a post process, usually close to the final steps in production. In that workflow creative decisions can still be made along the way and budgets can be flexible; scenes are often blocked out and tested in low quality before going through the full realistic treatment. Changes can be made up until the last minute (almost).

This has been evident in many films where the footage was shot but budget ran out when it came to post VFX.

LED volumes flip this workflow. All background layer effects need to be completed in final quality, as they are shot in camera and will then be near impossible to edit (or far more expensive to edit than green screen).

This means VFX is now paid for upfront and cannot work on a sliding scale. Every decision needs to be finalised before shooting (the spaceship can’t be designed later; it’s been shot already).

A lot more work needs to be done before the first actor steps in front of a camera.

Also, the system doesn’t “just work”.

There is a lot going on under the hood, which means there is a lot to go wrong. Small changes can have huge consequences for the overall stability.

This is a very big single point of failure, so road testing is extremely important.

Imagine having the entire production on standby whilst the system reboots, or whilst you are trying to figure out why the last small change broke everything…

Make sure you have tested as many scenarios as possible; an extra day of pre-production is surely far cheaper than a whole crew running overtime.

LED Volume Lighting

LED screens are incredible sources of light.

However, they are not suitable as a stand-alone lighting source. They really only provide a diffused sky box, which creates some nice reflections and environment lighting, but you will still need traditional lighting, especially for anything focused. Realistic lighting doesn't always look right on camera, especially when shooting at high speed.

The perfect LED volume

There is no one size fits all; LED volumes need to be designed for the production.

Like the rest of a production schedule, the volume needs to be carefully considered to accommodate the required angles, paths and access.

Building the virtual world

Traditional set building should still come into play even in the digital space. As with lighting, working exactly to real-world specifications often doesn’t look right. Objects need to be scaled or coloured differently to suit.

It is far more important that things ‘look right’ in camera, and this can be hard to see until you have the whole setup running with actors in place.

Video game environments are designed for monitors and not camera lenses. They also aren’t designed for running at multiples of 4K, and generally have a ton of unnecessary props.

Only build what you need. If you are shooting in a standard box set, just make the 3 walls; there is no need to have the computer work on processing the whole apartment building if it’s not in shot.

Being immersed in the environment

There are huge benefits to LED volumes.

The actors get a better feeling of the environment; it is much easier to ‘believe’ you are in the middle of a city, a jungle or space when the world surrounds you, rather than trying to imagine it inside a green box.

However, it is important to note that the screens don’t actually display correct positioning from the actor’s point of view, as the images are rendered as a forced perspective for the camera (similar to those street art illusions that only look right from one spot). The space also only has 2D walls to work with, so eye-lines generally do not translate correctly in camera; the tennis ball is still a better point of reference.
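The forced perspective can be sketched with a little geometry. This is a simplified illustration only, assuming a flat wall on the plane z = 0, a stage at z > 0 and the virtual world at z < 0. The positions and function names are made up for the example and are not taken from Unreal.

```python
import math


def wall_hit(eye, point):
    """Point where the straight line from eye to a virtual point crosses the wall (z = 0)."""
    ex, ey, ez = eye
    px, py, pz = point
    t = ez / (ez - pz)  # fraction of the way from eye to point where z reaches 0
    return (ex + t * (px - ex), ey + t * (py - ey), 0.0)


def angle_deg(origin, a, b):
    """Angle in degrees between the directions origin->a and origin->b."""
    va = [a[i] - origin[i] for i in range(3)]
    vb = [b[i] - origin[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(va, vb))
    cos = dot / (math.hypot(*va) * math.hypot(*vb))
    return math.degrees(math.acos(max(-1.0, min(1.0, cos))))


# Assumed positions in metres (x, y, z); all values are illustrative.
camera = (0.0, 1.5, 6.0)
actor = (3.0, 1.7, 2.0)
virtual_object = (0.0, 1.5, -20.0)  # 20 m "behind" the wall

# The wall shows the object where the camera's line of sight crosses it.
drawn_at = wall_hit(camera, virtual_object)

# Angle between where each viewer sees the drawing and where the object "really" is.
camera_error = angle_deg(camera, drawn_at, virtual_object)
actor_error = angle_deg(actor, drawn_at, virtual_object)
print(f"camera error: {camera_error:.1f} deg, actor eyeline error: {actor_error:.1f} deg")
```

From the camera the drawing lines up with the virtual object, but an actor standing off to the side has to look in a noticeably different direction to find it on the wall, which is one way to see why a physical marker can still be the better eyeline reference.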

I spent whole days inside the volume, and it is exhausting being surrounded by that much light and heat confined to a box with limited air flow. This is a huge factor which needs to be considered especially for action scenes as talent and crew can become fatigued far quicker.

Industry adoption

It surprised me at first how openly an industry often caught up in nostalgia, and hesitant about the digital takeover, embraced this.

The interesting thing is that all this cutting-edge technology actually enables film makers to take a step backward to traditional techniques and even shoot on film.

Compared to green screen, cinematographers really get to go back to the full potential of their craft, as they shoot the scene in camera and don’t need to ‘imagine’ the missing elements. Using lens tracking they can pull focus and have the virtual world respond accordingly, which allows in-the-moment creativity and experimentation not possible with green screen.

The end of green screen?

The term Virtual Production actually still includes green screen, and this is often a better system where shooting can take place on a green screen with the benefits of real-time rendering to preview the final scene.

Camera and lens tracking data can then be used in post with the full flexibility of green screen to continue to make changes.

This is a much cheaper alternative and doesn’t require anywhere near the computing power required to drive an LED volume, as only the camera’s resolution needs to be rendered and not all the pixels of an LED wall.
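To put rough numbers on that difference, here is a minimal Python sketch. The wall size (30 m by 6 m), the 2.5 mm pixel pitch and the UHD camera resolution are made-up values for illustration only, not figures from our studio, and the function names are hypothetical.

```python
def wall_pixels(width_m: float, height_m: float, pitch_mm: float) -> int:
    """Approximate pixel count of a flat LED wall from its size and pixel pitch."""
    columns = round(width_m * 1000 / pitch_mm)
    rows = round(height_m * 1000 / pitch_mm)
    return columns * rows


def camera_pixels(width_px: int = 3840, height_px: int = 2160) -> int:
    """Pixel count of a single camera frame (UHD by default, an assumption)."""
    return width_px * height_px


# Hypothetical wall: 30 m wide, 6 m tall, 2.5 mm pixel pitch.
led = wall_pixels(30, 6, 2.5)
cam = camera_pixels()
ratio = led / cam
print(f"LED wall: {led:,} px, camera frame: {cam:,} px, ratio: {ratio:.1f}x")
```

Even with these assumed numbers the wall needs several times as many pixels as the camera frame, every frame, and a bigger wall or a ceiling panel would only widen the gap.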

Major developments in AI, machine learning and depth sensing are improving real-time keying of green screens and even keying without clean screens.

In comparison the main benefits of LED volumes are the lighting and reflections and complex keying situations like hair and transparent elements.

Green screen is still a key component and not going away anytime soon; LED is just a new tool added to the Virtual Production family.

Unreal Engine in the broader Entertainment industry

I think Unreal will continue to shake up our industry in other ways.

It is by far the gold standard when it comes to realistic rendering, and for good reason: as this giant expands out of the gaming world it acquires talent and resources.

It is already being used for content in live events as a render node in media servers like Disguise, Smode and Pixera.

There are huge benefits to a cross industry platform.

Similar systems like Notch and TouchDesigner are amazing, but it is very difficult to find creators for them and they come with some form of cost of entry (not for the software, but to actually use it in production).

There is a constant stream of Unreal creators and a growing marketplace, with creators able to swap between gaming, film and live events.

The video side of our industry is obviously impacted and has been closely linked to development in the gaming world for a long time. I don't think we will see Unreal making ‘Waves’ in the audio world any time soon, but lighting is already seeing Unreal move in, with DMX support now included in the official build.

Another area Unreal is already affecting is CAD and lighting visualisation.

The main software in this industry is very expensive and rightly so, there is a lot of work that goes into accurately simulating lighting.

But Unreal trumps what is currently available, and a lot of people have already started building their own tools for lighting and video simulations.

The small teams currently developing visualisation render engines have nowhere near the resources of Unreal.

As real-time visualisation moves from what used to be a very niche industry and joins the more mainstream gaming world, I think we will see either some of the current providers switching or a few new ones being created based on Unreal Engine.

And with them no longer having to develop their own render engine they can focus their attention on the user experience.

Unreal’s marketplace and ecosystem also mean lighting fixture manufacturers could build their products as proprietary assets shared amongst all Unreal-based pre-vis.

It would now be in the manufacturers’ interest to provide detailed render models, as they would only need to do this once rather than once per piece of software, and it could help market their product (you would be more inclined to buy a fixture which you can simulate well offline).

Only having to develop for one marketplace, and with the peace of mind of IP security, they could be far more accurate and start to include features they wouldn’t usually share, including proprietary fixture features like proper macro simulations.
