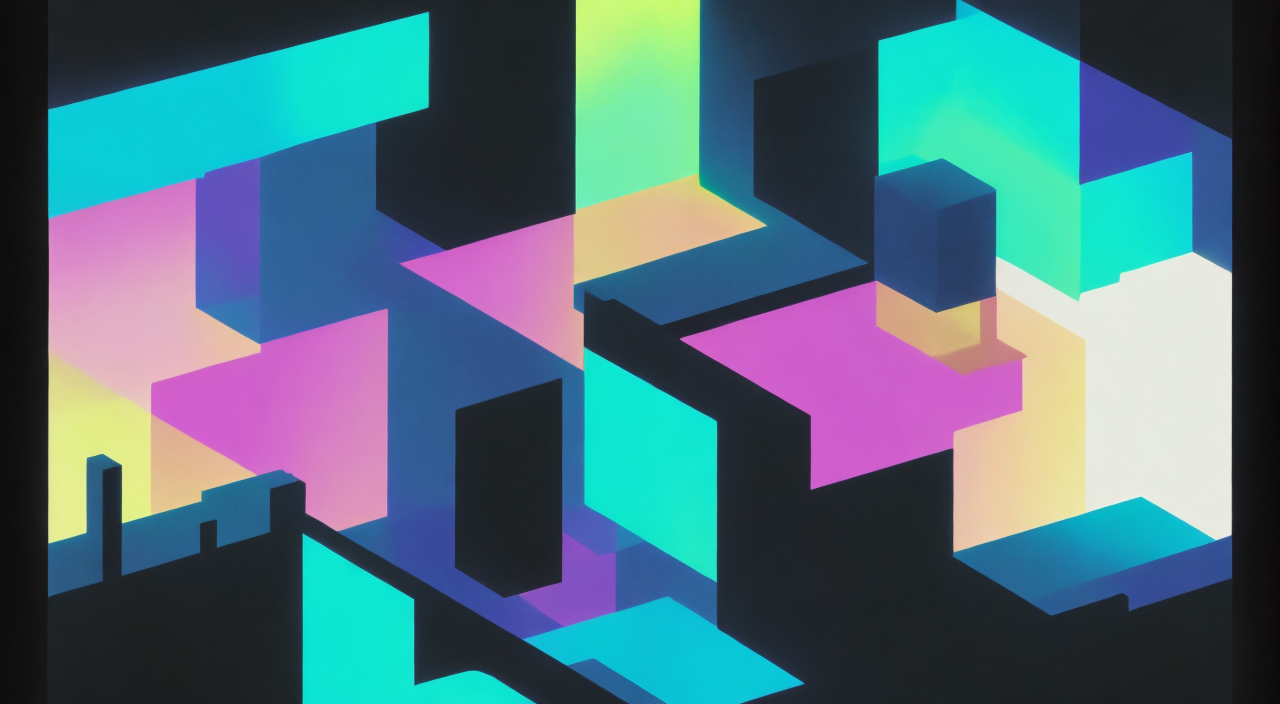

Hi everyone, I recently completed an audio-reactive animation using Stable Diffusion for a course project.

I'm working on a Colab notebook that puts together all the tools I used, if you want to check it out and use it yourself. (Feedback would be much appreciated, as it's my first time making something like this for others.)

There are two main parts to this: audio feature extraction and prompt interpolation.

To extract audio features, I used some signal processing tools in Python and manually separated out different components of the music I selected. I then did some smoothing and other post-processing that I felt was necessary to generate my desired effects. Given N audio features, you end up with a coefficient c between 0 and 1 for each of the N audio features at each of the M timepoints in the music.
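As a rough illustration of the post-processing step, here is a minimal sketch that smooths each raw feature and rescales it to [0, 1]. The moving average, the min-max scaling, the function names, and the example values are all assumptions for illustration, not the exact processing used in the project. Plain Python lists stand in for what would normally be NumPy arrays.

```python
def moving_average(values, window):
    """Smooth a sequence with a centered moving average (edges use a shorter window)."""
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        lo = max(0, i - half)
        hi = min(len(values), i + half + 1)
        smoothed.append(sum(values[lo:hi]) / (hi - lo))
    return smoothed


def normalize_01(values):
    """Rescale a sequence to [0, 1]; a constant sequence maps to all zeros."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def feature_coefficients(raw_features, window=5):
    """Turn N raw feature tracks of M samples into N tracks of coefficients in [0, 1]."""
    return [normalize_01(moving_average(f, window)) for f in raw_features]


# Hypothetical raw envelopes for N = 2 features at M = 8 timepoints.
raw = [
    [0.0, 0.1, 0.9, 0.2, 0.0, 0.8, 0.1, 0.0],  # e.g. a percussive component
    [2.0, 2.5, 3.0, 3.5, 4.0, 4.5, 5.0, 5.5],  # e.g. a slowly rising component
]
coeffs = feature_coefficients(raw, window=3)
```

Here `coeffs[i][t]` is the coefficient c for feature i at timepoint t. A wider `window` gives slower, smoother visual changes at the cost of blurring sharp hits.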

For prompt interpolation, I would start at an initial prompt and get the conditioning for it. I would then loop through all N audio features and travel towards the conditioning of each feature's corresponding prompt, one by one, with the distance travelled determined by that feature's coefficient.

You then use the resulting conditioning as the conditioning passed to the diffusion model for that frame.
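The interpolation loop described above can be sketched roughly as follows. This assumes plain linear interpolation between conditioning vectors (the post does not say whether linear or spherical interpolation was used), and it uses short lists of floats as stand-ins for the real conditioning tensors from the text encoder. All function names are hypothetical.

```python
def lerp(a, b, t):
    """Move from vector a towards vector b by fraction t (0 = stay at a, 1 = reach b)."""
    return [x + t * (y - x) for x, y in zip(a, b)]


def blend_conditioning(base, targets, coeffs):
    """Start at the base conditioning and step towards each target in turn."""
    cond = list(base)
    for target, c in zip(targets, coeffs):
        cond = lerp(cond, target, c)
    return cond


def conditioning_per_frame(base, targets, coeffs_by_feature):
    """coeffs_by_feature[i][t] is the coefficient of feature i at frame t."""
    n_frames = len(coeffs_by_feature[0])
    frames = []
    for t in range(n_frames):
        frame_coeffs = [feature[t] for feature in coeffs_by_feature]
        frames.append(blend_conditioning(base, targets, frame_coeffs))
    return frames


# Toy 2-dimensional "conditionings" for an initial prompt and N = 2 feature prompts.
base = [0.0, 0.0]
targets = [[1.0, 0.0], [0.0, 1.0]]
coeffs_by_feature = [
    [0.0, 1.0, 0.5],  # feature 1 over 3 frames
    [0.0, 0.0, 0.5],  # feature 2 over 3 frames
]
frames = conditioning_per_frame(base, targets, coeffs_by_feature)
```

Because the steps are applied one after another, the order of the features matters: a later feature with a coefficient of 1 fully overrides whatever the earlier features contributed.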

The other part, which is much more subjective, is determining what prompts to use in the first place. I probably spent the most time on this in total, and it takes quite a bit of trial and error to find prompts that interpolate between each other nicely when working with several prompts at once.

I also made use of img2img, but with modified noisy images as input, to produce some more subtle effects, like the pseudo-symmetry and the build-up in complexity in the first 45 seconds.
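One way such a modified noisy init image could be built is sketched below. This is purely a guess at the general idea, not the method used in the animation: it blends a grayscale image with its horizontal mirror and then adds Gaussian noise, so the result is only approximately symmetric. The function name, parameters, and nested-list image format are all hypothetical.

```python
import random


def symmetric_noisy_init(image, mirror_weight=0.5, noise_std=0.1, seed=0):
    """Blend each row with its mirror image, add Gaussian noise, and clamp to [0, 1].

    image: list of rows, each a list of pixel values in [0, 1].
    mirror_weight: 0 keeps the original, 0.5 is fully symmetric before noise.
    noise_std: standard deviation of the added noise (breaks exact symmetry).
    """
    rng = random.Random(seed)  # seeded so the same frame is reproducible
    out = []
    for row in image:
        mirrored = row[::-1]
        new_row = []
        for px, mpx in zip(row, mirrored):
            value = (1 - mirror_weight) * px + mirror_weight * mpx
            value += rng.gauss(0.0, noise_std)
            new_row.append(min(1.0, max(0.0, value)))
        out.append(new_row)
    return out


# Tiny 2x3 grayscale example image.
image = [
    [0.0, 0.2, 1.0],
    [0.4, 0.6, 0.8],
]
init = symmetric_noisy_init(image, mirror_weight=0.5, noise_std=0.05, seed=42)
```

Ramping `noise_std` or `mirror_weight` over the first frames would be one possible way to get a gradual build-up, though again that is an assumption.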

If you have any questions feel free to ask!

Discussion (2)

It’s so much fun diving into this!!

Cool project, I def need to try this.

Also, it could be quite a dope plugin for a local AUTOMATIC1111 SD install.