Using VJ performance techniques to generate a storyboard for an animated and partially audio-triggered 3D music video.

TREATMENT:

TLE is an audio/visual experience realised entirely in 3D animation, with two main objectives in mind:

The first was to experiment with using audio waveforms to manipulate and drive the behaviour of characters (objects), lighting and a plethora of 3D parameters.

The second, unconventional at the time, was no editing in Post - at all! This was achieved by animating sequentially from frame 00:00, rather than animating scenes at random, so that the final rendered frames could be loaded into a disk recorder and then laid down along with the audio for mastering to tape.

As the primary design approach, the musical track itself - its combined instruments and sophisticated rhythms, timbre (texture) and the actual shape of the audio waveform - became the basic building blocks for deciphering an abstract visual plot.

With the aid of a (then) recently developed audio-control 3D plug-in, coupled with traditional animation / modelling and the evolving VJ performance techniques, a modality for transposing sound to vision emerged that helped achieve a rhythmic and unified composition.

Some of the 3D animation attributes that are audio driven are:

Camera - lens size or perspective and dolly.

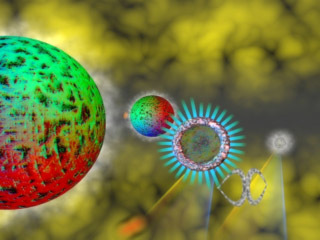

Geometry - symmetric complexity, shape, and scale.

Texture - colour level, generative texture, bump, specular and diffusion.

Lighting - colour & intensity, animation maps and cone scale.

Volumetric fog - noise detail and layer height.

Paths - deformation of vertices and travel speed used for camera, objects, lights and particles.
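As a rough illustration of how an audio waveform can drive one of the attributes listed above, here is a minimal sketch in Python. It is not the plug-in used for TLE, whose internals are not described here. It assumes a simple approach: measure the loudness (RMS) of the audio during each 25fps frame, then rescale that value into the range of a 3D parameter. The sample rate, the synthetic test tone, and the names `frame_envelope`, `map_to_parameter` and `light_intensity` are all hypothetical.

```python
import math

FPS = 25            # frame rate stated in the article
SAMPLE_RATE = 8000  # assumed sample rate for this synthetic example


def frame_envelope(samples, sample_rate=SAMPLE_RATE, fps=FPS):
    """Return one RMS loudness value per animation frame."""
    per_frame = sample_rate // fps  # audio samples that fall within one frame
    envelope = []
    for start in range(0, len(samples) - per_frame + 1, per_frame):
        window = samples[start:start + per_frame]
        rms = math.sqrt(sum(s * s for s in window) / per_frame)
        envelope.append(rms)
    return envelope


def map_to_parameter(envelope, low, high):
    """Linearly rescale envelope values so the loudest frame maps to `high`."""
    peak = max(envelope) or 1.0  # avoid dividing by zero on silence
    return [low + (high - low) * (value / peak) for value in envelope]


# Synthetic stand-in for a music track: a 220 Hz tone that swells once
# per beat at 150bpm (one swell every 0.4 seconds).
duration_sec = 2
samples = [
    math.sin(2 * math.pi * 220 * n / SAMPLE_RATE)
    * abs(math.sin(math.pi * 2.5 * n / SAMPLE_RATE))
    for n in range(duration_sec * SAMPLE_RATE)
]

envelope = frame_envelope(samples)
# Hypothetical target: a spotlight intensity keyed between 0.2 and 1.5.
light_intensity = map_to_parameter(envelope, 0.2, 1.5)
```

The resulting list holds one value per frame, so it could be applied as per-frame keys to any of the attributes above (lens size, scale, fog layer height and so on) simply by choosing a different `low` and `high`.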

By using the VJ methodology employed to transpose animation from music, the palette of dynamic audio-responsive attributes was merged to generate the rhythmic visual (Tiny Little) Engines.

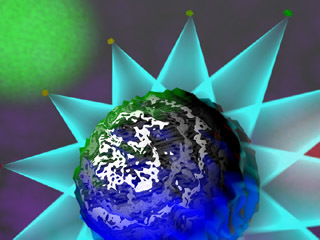

The camera, i.e. the viewer, is moved along undulating pathways of loops and vortices which pass through the orchestration of shape and colour as the little Engines' journey unfolds.

FINAL TRANSPOSITION:

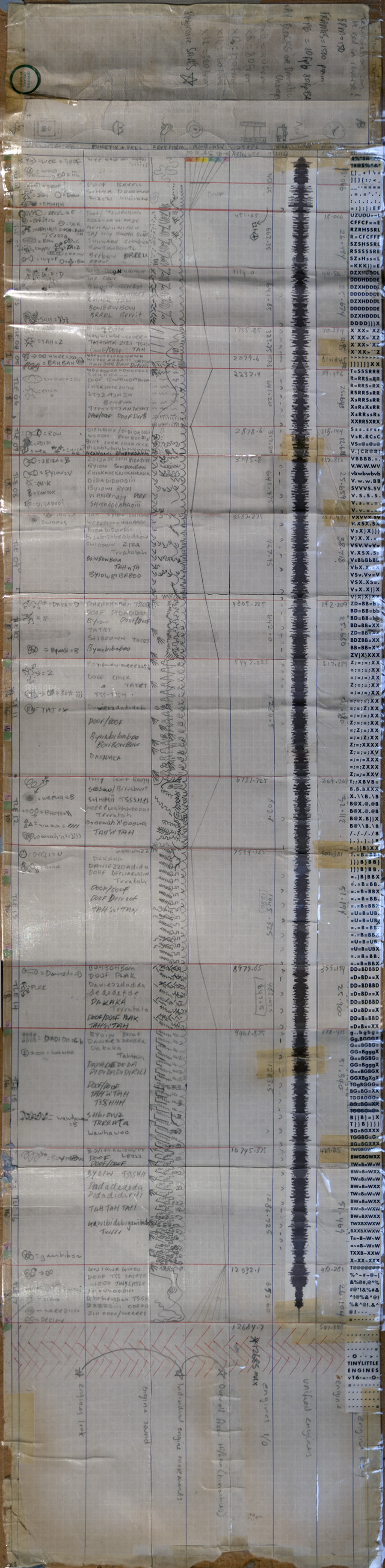

Where the audio waveforms were not, or could not be, driving 3D or atmospheric elements and their parameters, a system of transposition was employed for TLE to generate a master crib sheet (aka storyboard).

This system was developed using VJ performance techniques to notate the sounds ‘gesturally’ in an abstract and freeform way. The gestures then inform the (manual) animation of the 3D elements.

This allowed a somewhat seamless integration of audio and non-audio driven components.

One such example can be seen where the orbs' spotlight beams change in time with the tempo of the music. This was realised by VJ'ing 2D images live into a disk recorder using Elan Performer (on an Amiga 1200). Those frames were then applied as projection maps, along with audio-driven volumetric fog, to the orb beams (spotlights), so that they animate in time in the final render.

Brief descriptions of the Final Transposition tracks follow, referring to the vertical tracks shown in the image above from RIGHT to LEFT:

1) The primary, multi-purpose ABC track contains letters tapped into a text document as a ‘Master Visual Reference’ to the different instrument sounds and rhythms. Alpha-numeric keys were used, with each unique character representing a single beat at 8 beats per line (1 Bar) and groups of 2, 4, 8, 10, 12 or 16 Bars representing scenes. Fortunately, in this case the music was 150bpm, which works out to exactly 10fr per beat at 25fps and made animating a much simpler translation process!

2+5) Final animation and music timings are displayed in the FPS and MIN-SEC tracks. All timings were made by listening to a cassette recording of the track - over and over again!

8+9) The look and feel of the translated objects and the shape of the sounds are shown in the FONETIX track (how the sounds could be primitively shaped by mouth gestures) and the VISUALIZE track (gestural icons drawn in a flow-state to represent sound as 3D objects).

3+7) The shape and style of the object animations for each scene, realised using gestural strokes, are shown in the FREEFORM track and were loosely inspired by the WAVE form track.

4) Background colour (and rhythm) is shown in the XYZ track, noting distinct bumps and flows as the track progresses.

6) Background and character colour schemes assigned for the flow/feel are documented in the RGB-HSV track. To keep the human element of creative perception and interpretation intact, the colours selected reflect the tonal values temporally throughout the track as perceived by ear, not as a mathematical translation.

10+11) References for 3D scene numbers and file names are shown in the last two tracks.
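The timing arithmetic behind the ABC, FPS and MIN-SEC tracks can be sketched in a few lines of Python. The 150bpm tempo, 25fps frame rate and 8 beats per Bar come from the description above. The function names, the sample track lines and the idea that 'K' marks a kick drum are hypothetical, made up purely for illustration.

```python
FPS = 25            # frames per second, as stated in the article
BPM = 150           # tempo of the track, as stated in the article
BEATS_PER_BAR = 8   # one line of the ABC track = 8 beats = 1 Bar


def frames_per_beat(bpm=BPM, fps=FPS):
    """Frames that elapse during one beat."""
    return fps * 60 / bpm


def beat_to_frame(bar, beat, bpm=BPM, fps=FPS):
    """Frame number at which a given beat starts (bar and beat are 0-based)."""
    total_beats = bar * BEATS_PER_BAR + beat
    return round(total_beats * frames_per_beat(bpm, fps))


def frame_to_min_sec(frame, fps=FPS):
    """Convert a frame number to (minutes, seconds), as in a MIN-SEC track."""
    seconds = frame / fps
    return int(seconds // 60), seconds % 60


# Hypothetical ABC-track lines: one character per beat, 8 characters per Bar.
abc_track = ["K.s.K.s.", "K.sHK.sH"]

# Frame on which each 'K' (assumed here to mark a kick drum) lands.
kick_frames = [
    beat_to_frame(bar, beat)
    for bar, line in enumerate(abc_track)
    for beat, char in enumerate(line)
    if char == "K"
]
```

Because 150bpm divides evenly into 25fps, every beat lands on a whole frame: `frames_per_beat()` is 10, so each Bar spans 80 frames (3.2 seconds) and no rounding drift builds up over the length of the track.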


PRODUCTION CREDITS:

DESIGN & ANIMATION:

Adem Jaffers

c1998

PRODUCTION FACILITY:

Unreal Pictures

In-kind support!

ONLINE FACILITY:

Icon Digital & Comcopy

ONLINE OPERATOR:

Mike Swift

MUSIC WRITTEN & PRODUCED BY:

The Visitors - Andrew Till, Ollie Olsen & Geoffrey Hales

PUBLISHED BY:

Psy-Music / PolyGram Music

Taken from the CD Psy-033 (Psy-Harmonics Volume 3)

"Hacking the Reality Myth"

Psy-Harmonics - Australia

PRODUCTION STATISTICS:

- 3D software - 3dsMax

- Grading - Digital Fusion

- Disk Playback - DPS Perception

- Network Render Engine - 966mhz

- Render time per frame - 8:24sec

- Animation Duration - 8:30sec

- Total frames - 12,685fr

- Database size - 5.2gig

- Production duration - 22mnths

- Hardware Platform - WinNTv4

SPECIAL THANX TO:

David Nelson & the Unreal Pictures crew, Psy-Harmonics crew, James Murray & the Creative Access crew, my mum, dad, Jeff Jaffers, Zenep Jaffers, and Melanie Carr.
